Before you spend money on AI tools, a consultant, or a training program, answer one question: is your business ready?

"Ready" doesn't mean "sophisticated." It means your business has the raw material that AI needs to add value: processes people can describe, data the team can trust, at least one person willing to learn new tools, a small budget to spend on them, and a culture where somebody is allowed to say "let's try it."

Miss any of those and you'll get the same result a hundred other small businesses have gotten: a dozen unused logins, a frustrated team, and a founder who thinks "AI just doesn't work for us." That's not an AI problem. It's a readiness problem, and it's fixable.

This is the readiness assessment I run with clients before I take on any implementation work. It covers five dimensions, each scored 1 to 5, for a maximum of 25 points. You can run it yourself in about an hour. At the end you'll know exactly where you stand, what to fix first, and whether to start a pilot this week or spend a month cleaning up before you do.

Why a readiness assessment matters

Two years of watching small-business AI rollouts has taught me the same lesson over and over: the bottleneck is almost never the AI.

The bottleneck is that nobody can describe how a workflow runs. Or that customer data lives in five places and none of them agree. Or that the team has been told "we should be using AI" by a founder who hasn't used it themselves. Or that the budget was set assuming AI is free because ChatGPT has a free tier.

AI is not a magic layer you add to a broken business. It's a force multiplier on whatever you already do. If your processes are sharp, AI makes them sharper. If they're fuzzy, AI multiplies the fuzz.

A readiness assessment catches this before you spend the money. It's cheap (one afternoon), it's honest (the score is the score), and it gives you the exact order of fixes. When I skip it with a client, the first two weeks of the engagement become a forced assessment anyway. Better to do it up front and save the money.

The five dimensions

Every dimension gets a 1 to 5 score. The dimensions are:

- Process -- how clearly the team can describe how work gets done.

- Data -- whether the information AI would need is accessible and trustworthy.

- Team -- whether anyone on the team has time, interest, or skill to adopt new tools.

- Budget -- whether the business can afford real tools, not just free tiers.

- Culture -- whether leadership will let a pilot happen without committee approval.

In my experience, these five together cover about 95% of what determines whether a pilot will succeed. Miss one, and the best AI tools in the world can't save you.

Dimension 1: process (score 1-5)

What it measures: How well your team can describe, in specific steps, the work that happens in your business every week.

Score 1 -- No documented processes. Nobody can walk through what happens on an average Monday. The work "just happens" and when somebody leaves, their replacement relearns everything from scratch.

Score 2 -- A few processes are in somebody's head. You have a couple of key workflows that one person has figured out. They could describe them out loud, but nothing is written down. Losing the right person would be a 3-month recovery.

Score 3 -- Some documented workflows, mostly for customer-facing work. You have a written onboarding flow, or a support SOP, or a sales script. Not everything. Internal operations are still improvised.

Score 4 -- Most repetitive workflows documented, updated in the last six months. The team can point to a doc for any of the top 10 workflows. People use the docs instead of letting them gather dust.

Score 5 -- Process-first business. Every repetitive task has an owner, a documented flow, and a metric. New hires are trained from docs, not from shadowing. Changes to processes are deliberate and tracked.

Why it matters for AI: AI tools need specific instructions. If you can't describe the workflow to a human, you can't describe it to Claude or ChatGPT either. A score of 1 or 2 in Process means your first AI project should not be a pilot. It should be two weeks of interviewing your own team and writing down what they do.

Dimension 2: data (score 1-5)

What it measures: Whether the data AI needs to do its job is real, accessible, and trustworthy.

Score 1 -- Data chaos. Customer info in seven spreadsheets, three inboxes, and a notebook. Nobody knows which version is the current one. "Which CRM do we use?" gets three different answers.

Score 2 -- One primary system, but it's half-full. You have a CRM, but only the founder's accounts are up to date. Or you have analytics, but nobody trusts the numbers.

Score 3 -- A working system of record for customers, but ops data is scattered. Your CRM is usable, but financial data, ops data, and project data live in other places that don't talk to each other.

Score 4 -- Centralized customer data and most ops data is structured. You can pull a list of your top 50 customers with their contact info, status, and last interaction in under 5 minutes. Same for deals, projects, or whatever your core object is.

Score 5 -- Data warehouse mindset. You have a single source of truth for each type of record. Tools read from and write to the right places. When something gets out of sync, somebody notices within a day.

Why it matters for AI: The single fastest way to make an AI workflow fail is to feed it dirty data. A cold email campaign with 30% invalid addresses looks like an AI failure when it's a CRM hygiene failure. A support bot that gives wrong answers is a knowledge base problem, not a bot problem. Score 1 or 2 on Data means fix the data first -- even one weekend of cleanup will move you up a level and make every subsequent AI project 10x more useful.

Dimension 3: team (score 1-5)

What it measures: Whether you have anyone on the team with the time, interest, or skill to own new AI tools after a consultant (or your future self) leaves.

Score 1 -- Nobody is interested. The team is fully loaded with existing work and hostile or indifferent to new tools. Previous tool rollouts failed because nobody adopted them.

Score 2 -- One curious person, no time. You have at least one team member who uses ChatGPT casually, but they have no bandwidth to take on something new during the workday.

Score 3 -- One curious person with an hour a day. Same as above, but the founder or ops lead is willing to carve out time for that person to learn and own one new workflow.

Score 4 -- Two or three people already experimenting. Multiple team members use AI tools unofficially. They trade prompts. They've built at least one shortcut that saves time.

Score 5 -- AI is already part of daily work for most people. The team uses Claude or ChatGPT every day. Someone on the team has built internal automations. New tool rollouts take days, not months.

Why it matters for AI: Tools don't implement themselves. They need an owner who uses them every day until the workflow becomes habit. Score 1 on Team means hire or reassign first. Score 2 means build the habit before you build the workflow (give the curious person one hour a day, no other change, for two weeks).

Dimension 4: budget (score 1-5)

What it measures: Whether the business can spend on real tools and, if needed, on outside help.

Score 1 -- Free tier only. No budget line for software beyond existing subscriptions. Every new tool has to justify itself at zero dollars.

Score 2 -- Under $100/month for new AI tools. You can swing a Claude Pro or ChatGPT Plus for one person. That's it.

Score 3 -- $100 to $500/month. You can build the lean stack: Claude Pro, ChatGPT Plus, Perplexity, one automation tool, one image tool. This is where most small businesses should live.

Score 4 -- $500 to $2,000/month plus one-time projects. You can run the full stack for 5 to 10 people plus occasional outside help for a pilot.

Score 5 -- Real AI budget. There's a line item for AI tools and advisory. New tools get approved without a three-meeting debate. A good pilot that proves itself can scale to the whole team the same month.

Why it matters for AI: Free tiers are fine for testing. They're not fine for running a business. You will hit rate limits at the worst time, lose work because a session expired, or get stuck on an older model. A team trying to run production workflows on the free tier is a team that's going to bounce off AI and blame the tools. Score 1 or 2 on Budget means either start with the smallest possible workflow (one person, one tool) or raise the budget before you try.

Dimension 5: culture (score 1-5)

What it measures: Whether leadership will let experiments happen, reward owners, and tolerate small failures.

Score 1 -- No experiments allowed. Every new tool needs a committee. Every failure is a career risk. Innovation is "somebody else's job."

Score 2 -- Experiments are tolerated but not supported. The team can try things on their own time. Nothing formal. No budget. No recognition for wins.

Score 3 -- The founder will approve a pilot. If a team member proposes a specific pilot with a clear scope, leadership will say yes within a week. A failed pilot is not a career problem.

Score 4 -- Pilots are encouraged and resourced. The founder actively asks "what can we pilot next quarter" and gives the owner time and budget to try.

Score 5 -- Experiment-first culture. Small bets are the default. People talk openly about what didn't work. Wins are copied across the company fast.

Why it matters for AI: AI pilots fail and succeed at different rates than traditional projects. A 30% failure rate on individual pilots is normal and fine -- you learn from each one and move on. In a culture where a 30% failure rate is "unacceptable," people won't propose pilots, and the few that get approved will be low-ambition "safe" projects that never deliver the 10x wins. Score 1 on Culture means the problem is above your pay grade -- talk to the founder before you talk to a consultant.

The scorecard

Score each dimension 1 to 5. Add them up.

| Dimension | Score |
|---|---|
| Process | __ /5 |
| Data | __ /5 |
| Team | __ /5 |
| Budget | __ /5 |
| Culture | __ /5 |
| Total | __ /25 |

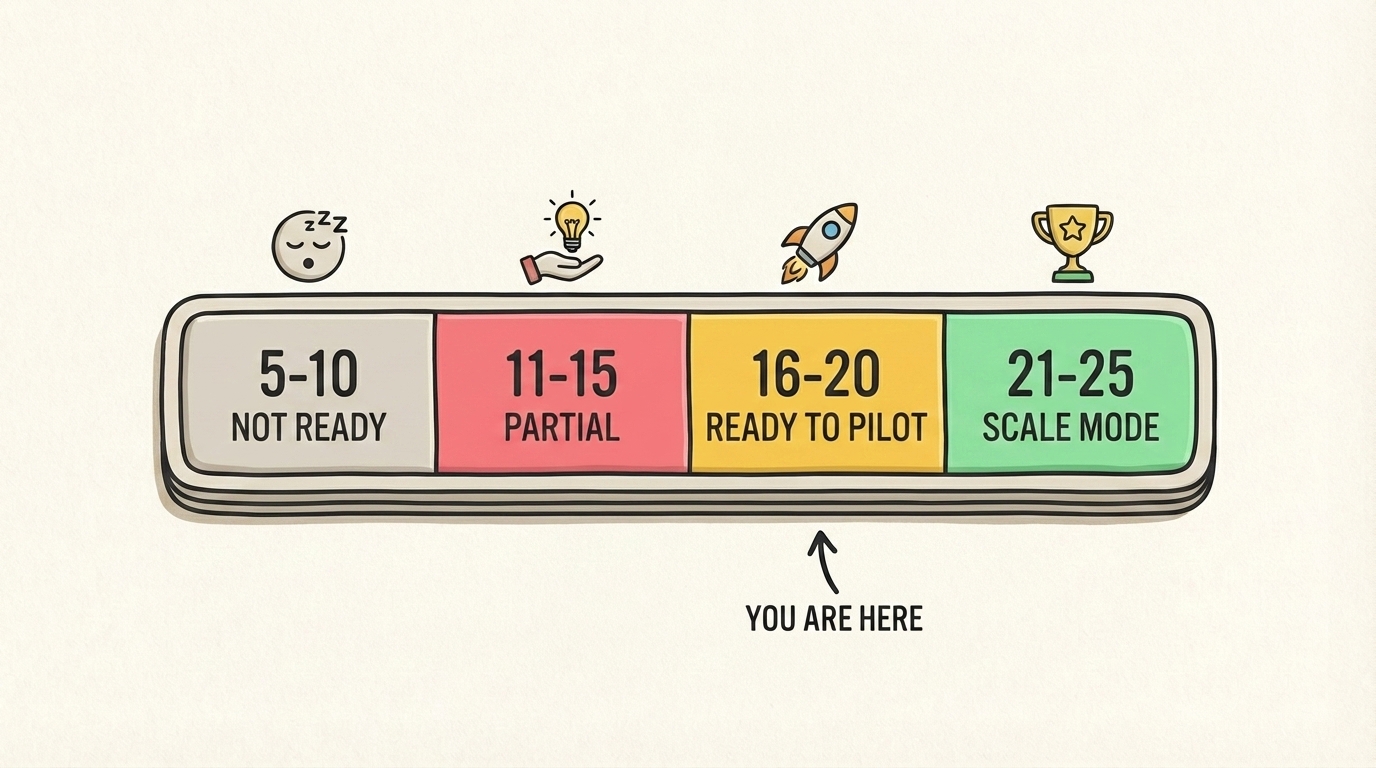
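If you'd rather keep the scorecard in a script than on paper, here is a minimal sketch of the arithmetic. The function names and the example scores are made up for illustration; only the five dimensions and the 1 to 5 scale come from the assessment itself.

```python
# The five dimensions from the scorecard, in the order they are scored.
DIMENSIONS = ("Process", "Data", "Team", "Budget", "Culture")


def total_score(scores: dict[str, int]) -> int:
    """Validate a scorecard and return the total out of 25."""
    missing = [name for name in DIMENSIONS if name not in scores]
    if missing:
        raise ValueError(f"Missing dimensions: {missing}")
    for name in DIMENSIONS:
        if not 1 <= scores[name] <= 5:
            raise ValueError(f"{name} must be scored 1 to 5, got {scores[name]}")
    return sum(scores[name] for name in DIMENSIONS)


def weakest_dimensions(scores: dict[str, int]) -> list[str]:
    """Return every dimension tied for the lowest score."""
    lowest = min(scores[name] for name in DIMENSIONS)
    return [name for name in DIMENSIONS if scores[name] == lowest]


# Hypothetical scores, for illustration only.
example = {"Process": 3, "Data": 2, "Team": 4, "Budget": 3, "Culture": 3}

print(f"Total: {total_score(example)} /25")
print(f"Weakest: {', '.join(weakest_dimensions(example))}")
```

Returning every dimension tied for last, instead of just one, is deliberate: if two dimensions share the lowest score, you still have to choose which to fix first, and the script shouldn't make that call for you.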

What your score means

5 to 10: not ready yet

You're not ready for an AI project. That's a reality check that saves you money and frustration, not a judgment.

The problem: One or more core dimensions (usually Process or Data) are at a 1. Any AI tool you buy right now will get rejected by the organism. This is the state where I politely decline engagements, because I'd be taking money for work that wouldn't stick.

The fix: Spend 30 to 60 days working on the lowest-scoring dimension. Not all of them. Just the lowest.

- Process at 1-2: Interview the team. Write down what each person does in a normal week. One page per person is enough. This alone will move you from a 1 to a 3 in Process and often surface wins that don't need AI at all.

- Data at 1-2: Pick one system (your CRM or your customer database) and spend a week cleaning it up. Delete dead records. Merge duplicates. Standardize fields. You don't need a data project -- you need a tidy week.

- Team at 1: Hire or reassign. If nobody on your team will ever own new tools, no framework will help you. AI is not your first problem.

- Budget at 1: Raise the ceiling to $100/month, or accept that you're building at free-tier speed.

- Culture at 1: Start with one person running one workflow off the books. If leadership isn't on board, a formal pilot will die. An informal one can build the case.

Come back and retake the assessment in 30 days.

11 to 15: partial readiness

You can start, but you should start small and be honest about the weak dimension.

The pattern: Usually 3s across the board or one 4 balanced by a 1 or 2. The business works. There are clear workflows and a willing team, but one of the five dimensions will fight you.

The fix: Run one tightly scoped pilot on the strongest dimension while you fix the weakest one in the background.

- If Process and Team are strong but Data is weak, pick a workflow that doesn't rely heavily on clean data. Content drafting, meeting notes, research summarization -- all safe.

- If Data and Team are strong but Process is weak, start by using AI to document the processes. Interview the team, feed the transcripts to Claude, have it write the first draft of an SOP. You turn your weakness into your first workflow.

- If Budget is the weak one, use free tiers for the pilot. Only upgrade the one seat for the owner. Prove value before spending more.

At 11-15 points, your first pilot is a learning exercise. The goal is less "save 10 hours a week" and more "prove that AI can work here at all, so leadership feels comfortable investing in the next one." Aim for a small, fast, boring win.

16 to 20: ready to pilot

You're in the sweet spot for an AI assessment or a self-run first workflow.

The pattern: Mostly 4s with maybe one 3. A real business with real processes, real data, a willing team, enough budget, and a founder who will let an experiment happen. This is the state I see most often in the 10-50 person businesses I advise.

The fix: Pick your highest-impact repetitive workflow and run the four-phase implementation loop. You can do this yourself in 90 days or bring in an advisor to compress it into 4 to 6 weeks.

At 16-20, you don't need a readiness fix -- you need execution discipline. The risk is not that your pilots will fail, it's that you'll start five of them at once and finish none. Pick one. Ship it. Then pick the next.

21 to 25: scale mode

You're past pilot territory. You don't need a consultant to run your first project -- you need one (or an internal ops lead) to keep you from sprawling.

The pattern: Mostly 5s. Process, data, and team are all strong. Budget and culture support experimentation. This is usually a Series A-ish SaaS company, a well-run services business over 30 people, or a founder-led team that's already been doing things right for years.

The fix: Stop doing one-off pilots and start building internal capability. Hire or promote one person who owns "AI systems" as part of their role. Build a small internal library of prompts, patterns, and tools that other teams can reuse. Set a rule: every new workflow starts with "can AI do part of this?" as a real question, not a formality.

At 21-25, the risk isn't failure -- it's dilution. You can now afford to try a lot of things, which means you can also afford to never finish any of them. The discipline to ship and measure one workflow at a time matters more at this stage than at any other, because the cost of drifting is higher.
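The four score bands above boil down to a simple lookup. This is a rough sketch, not a tool I ship to clients: the function name `readiness_band` is hypothetical, and the band labels are just the section titles from this post.

```python
def readiness_band(total: int) -> str:
    """Map a total score (5 to 25) to its readiness band."""
    if not 5 <= total <= 25:
        raise ValueError(f"Total must be between 5 and 25, got {total}")
    if total <= 10:
        return "not ready yet"
    if total <= 15:
        return "partial readiness"
    if total <= 20:
        return "ready to pilot"
    return "scale mode"


# Print the band at each boundary to check the cutoffs.
for total in (5, 10, 11, 15, 16, 20, 21, 25):
    print(total, "->", readiness_band(total))
```

The band is only a starting point. Two businesses with the same total can need very different first moves, which is why the weakest dimension matters as much as the number.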

A real example

One of my clients last year was a 22-person B2B services agency. Their scores and what we did:

- Process: 3 (customer-facing workflows documented, ops was a mess)

- Data: 2 (CRM half-full, project data scattered)

- Team: 4 (two curious people, one with bandwidth)

- Budget: 4 ($800/month AI budget approved)

- Culture: 4 (founder actively asked for pilots)

- Total: 17

They were ready, but the weak link was data. Their customer records were in HubSpot, but project data lived in three Google Sheets and a Notion database. If we'd tried to build a "smart client status dashboard" as the first pilot, it would have failed -- the data wasn't clean enough to feed an AI.

Instead, the first pilot was a weekly sales recap email for the founder. The input was HubSpot deal data (which was clean), the output was a 500-word summary with wins, risks, and suggested next steps. Total setup time: three hours. Time saved per week: about two and a half hours of the founder's time. Not huge, but a real win, shipped in week one.

We used that win to justify two weeks of data cleanup across the Google Sheets and Notion. Once the data was trustworthy, pilot two was a project status report generator -- the workflow that was originally the dream state. It shipped in week six, saved about eight hours a week across the team, and became the template for three more workflows in the months after.

This is the pattern. You don't fix your weakest dimension before you start. You pick a pilot that works despite your weakness, then use that win to buy permission to fix the weak dimension. Then your next pilot is the big one.

When to run this assessment yourself vs. bring in help

If you can score the five dimensions honestly in an hour with one other leader, run it yourself. The scorecard isn't hard. The hard part is being honest about the score. If you think you're a 4 on Data because "we have a CRM," you're probably a 2. Ask the person who uses the CRM every day, not the person who bought it.

Bring in an outside advisor for the assessment if any of these apply:

- The leadership team disagrees about the scores and you want an outside tiebreaker

- You've run an informal version of this before and the team pushed back on the findings

- You're planning to spend over $50,000 on AI this year and want an independent sanity check

- You want a written report you can hand to the board

An outside assessment should take about a week and cost between $2,000 and $5,000. It should produce a one-page scorecard, a one-page findings summary, and a prioritized list of what to fix first. If somebody quotes $25,000 for "AI readiness," you're paying for slides.

What to do with the result

However you run the assessment, do this after:

- Write the score down where the team can see it.

- Commit to one thing to fix in the next 30 days, based on your weakest dimension.

- Pick your pilot workflow only after the fix is in motion.

- Retake the assessment in 90 days.

The 90-day retake is the part people skip. Do it anyway. You'll see your scores move and know whether the fixes are working.
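One way to make the retake concrete is to put the two scorecards side by side and look at the change per dimension. A minimal sketch, assuming you saved the first set of scores; both scorecards below are invented for illustration, and `score_changes` is a hypothetical helper name.

```python
def score_changes(before: dict[str, int], after: dict[str, int]) -> dict[str, int]:
    """Return the per-dimension change between two scorecards."""
    if before.keys() != after.keys():
        raise ValueError("Both scorecards must cover the same dimensions")
    return {name: after[name] - before[name] for name in before}


# Hypothetical scores from a first run and a 90-day retake.
first_run = {"Process": 3, "Data": 2, "Team": 4, "Budget": 3, "Culture": 3}
retake = {"Process": 3, "Data": 4, "Team": 4, "Budget": 3, "Culture": 4}

changes = score_changes(first_run, retake)
for name, delta in changes.items():
    print(f"{name}: {first_run[name]} -> {retake[name]} ({delta:+d})")
print(f"Total: {sum(first_run.values())} -> {sum(retake.values())}")
```

If the dimension you committed to fixing hasn't moved, that is the signal to revisit the fix before you start another pilot.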

Getting help

If you want me to run the assessment with your leadership team and produce the scorecard, book a 2-week AI audit. The first week is the readiness assessment and opportunity map. The second week is the first pilot. You end up with a real score, a fixed weak dimension, and a working workflow -- all inside 14 days.

If you'd rather run it yourself, use this post as the template. You can also read my guide to implementing AI in a small business, see what small-business AI consulting looks like, or grab the AI starter kit with the prompts I use every week.

The assessment is the cheapest thing you can do before committing money to AI. One hour of honest scoring saves months of "why isn't this working?" Run it, write down the number, and use it to decide what to do next.